ChatGPT is not a classroom tool. ChatGPT is a data-gathering platform designed to train and test a large language model AI developed by OpenAI.

Most of what people are saying about ChatGPT ignores all of the reasons it should not be in the classroom in its current iteration.

If you work in a K-12 environment, you understand that time is currency. Time is a factor in scheduling, professional development, holidays, breaks, and planning. If you want to waste time, focus on the current ChatGPT. The near future of AI will be better for the classroom, will likely not need prompts, and will require the user to build the relationship over time to be effective.

The Terms of Service prohibit the use of ChatGPT for most students

How many services and websites are your K-12 school using that openly declare they are for 18 and over (18+)?

The answer is none. In some countries, this would likely get you deported immediately. You would open the school to a lawsuit in places like the USA.

The OpenAI Terms of Service state:

You must be at least 13 years old to use the Services. If you are under 18 you must have your parent or legal guardian’s permission to use the Services. If you use the Services on behalf of another person or entity, you must have the authority to accept the Terms on their behalf. You must provide accurate and complete information to register for an account. You may not make your access credentials or account available to others outside your organization, and you are responsible for all activities that occur using your credentials.

These terms were updated in the last few weeks. Educators around the world read into these terms what they wanted: ChatGPT is legal for use in K-12 education. Read the terms again.

In places like the USA, the only students who can use ChatGPT are those who are at least 13 and have parental consent. The consent letter needs to state that the parents are allowing the students to enter into a contract with OpenAI.
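To see what this rule does to a class list, here is a minimal sketch of the age and consent check as I read the quoted terms. The `Student` record, the function names, and the sample class are hypothetical and only for illustration; this is not legal advice and not anything OpenAI publishes.

```python
from dataclasses import dataclass

# Thresholds taken from the quoted terms; everything else is an assumption.
MIN_AGE = 13    # "You must be at least 13 years old"
ADULT_AGE = 18  # under 18 requires parent or legal guardian permission


@dataclass
class Student:
    name: str
    age: int
    has_parental_consent: bool = False


def may_use_service(student: Student) -> bool:
    """Return True if the student meets the age and consent conditions."""
    if student.age < MIN_AGE:
        return False
    if student.age < ADULT_AGE:
        return student.has_parental_consent
    return True


def split_class(students):
    """Split a class into names that may use the service and names that may not."""
    allowed = [s.name for s in students if may_use_service(s)]
    excluded = [s.name for s in students if not may_use_service(s)]
    return allowed, excluded


# Hypothetical class of four students.
sample_class = [
    Student("A", 12),
    Student("B", 14, has_parental_consent=True),
    Student("C", 15, has_parental_consent=False),
    Student("D", 18),
]
allowed, excluded = split_class(sample_class)
print("allowed:", allowed)
print("excluded:", excluded)
```

Even in this tiny example, half of the class ends up on the excluded list, which is the resource gap a teacher would have to plan around.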

What other platforms or services do we do this for? In five countries and over 17 years working in educational technology, the answer is none.

The IBO recently created some guidelines for the use of the service. The IBO did not consider that these terms likely will not apply to the numerous IB schools in China, much of Asia, and the Middle East. It seems one group of students will have a green light to use a powerful tool, and others will not. In the early 2000s, we called this a digital divide.

Many pundits have made the same mistake after spending days or weeks writing ebooks that ignore the 18+ restriction in the classroom. They are banking on sending contracts home to parents. What if parents opt out? How does a class function when differentiation creates a resource gap?

ChatGPT owns your data and your data is not private

I am an extensive ChatGPT user. I use it nearly every day to work on coding. I also create experiments and have open conversations with the AI about itself. If a user has not interacted with the system as another intelligent being, they need help understanding how to use it. It is not a search engine, and it is often inaccurate. The system is training and learning at this stage. (My conversations, webinars, etc., on this topic can be found here if you are curious.)

Any power user of the platform is going to use the client software. The software must be downloaded and installed. Because the software goes onto your equipment, you receive the customary notices. Here is one I believe everyone should be aware of:

As you enter data into the system, it enters the large language model training dataset. All the chatbot sessions around the world have access to this data. The workflow removes personal user information. Attributing data (work) back to the owner (user) is impossible.

Think about a person with perfect memory writing down everything they hear, but they cannot see who says it.

Imagine I am a student in Brazil. I am writing an IBDP paper on the impact of agriculture in Mesoamerica in 1400 BCE. Within a few months, a student in Germany works on the same topic for the IBDP program.

A likely outcome will be a similar paper. This is not plagiarism. It is something else.

Policies allowing this type of work must exist before schools allow technology implementation. In effect, the new policy structure would eliminate the requirement that students own the entirety of their submitted work.

These issues are not limited to writing words and phrases. The AI will be able to group people, like IB moderators. Grouping is the most efficient way to build an understanding, and it is how the AI is built: in layers of grouped connections. If an IB moderator uses the AI, the AI will learn to write how the moderator class of people prefers. Students will have their results influenced by these patterns. Therefore, institutions like the IBO also need policies around the AI tools they plan to implement.

Schools need to redefine the concept of student work before this type of technology is deployed.

I have heard people compare systems like ChatGPT to conversations at a party. This is different from chatting to people about an idea at a party. This is like asking everyone synchronously at every party in the world about an idea they have already implemented, recording the feedback, streamlining the concept, and producing a better result each time the process is repeated.

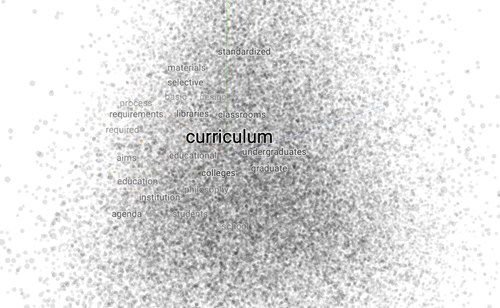

Here is how the term “curriculum” clusters around terms. You can experiment with how these types of models work using this resource: https://projector.tensorflow.org/
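If you want a feel for what "clustering" means here without opening the projector, the sketch below ranks words by cosine similarity to "curriculum". The three-dimensional vectors are made-up toy values chosen for illustration, not real embeddings from any model, and the function names are my own.

```python
import math

# Toy, hand-written vectors. Real models use hundreds of dimensions
# learned from data; these numbers are assumptions for illustration only.
TOY_EMBEDDINGS = {
    "curriculum": [0.90, 0.80, 0.10],
    "syllabus": [0.85, 0.75, 0.15],
    "assessment": [0.70, 0.90, 0.20],
    "banana": [0.10, 0.05, 0.95],
    "tractor": [0.20, 0.10, 0.80],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest_neighbors(word, embeddings, k=2):
    """Return the k words whose vectors point closest to the given word's vector."""
    target = embeddings[word]
    scored = [
        (other, cosine_similarity(target, vec))
        for other, vec in embeddings.items()
        if other != word
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


for neighbor, score in nearest_neighbors("curriculum", TOY_EMBEDDINGS):
    print(f"{neighbor}: {score:.3f}")
```

With these toy values, the school-related words land next to "curriculum" and the unrelated words fall away, which is the same grouping effect the projector shows at a much larger scale.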

The ownership issues become more interesting when you realize that AI can generate artwork. DALL-E 2 can create artwork from ideas and text.

However, that artwork is not owned by the user (student). The US Copyright Office recently ruled on this, and I have also discussed the terms of ownership with the AI.

Anyone opening the door to allow AI-generated essay content must contend with AI-generated art, music, deep fake videos, etc.

Schools, and even the IBO, need to be equipped to deal with the nuance of this content. If you open the door for one, you have opened the floodgate for all.

Teachers, your content is at risk of being reviewed by parents

New technology is dynamic, and it is not easy to control. Technology that is ready for the classroom scores high on confidentiality, data integrity, and availability (can everyone use it equally and consistently?).

Technology ready for a teacher to use with students does not come with an 18+ label.

The consequences of using new technology need to be considered along with the hype.

For example, if you allow mobile phones in the classroom, you enable teachers to be recorded and lessons to be posted online.

Students will need their parents to register and buy a license for this version of ChatGPT to be used at scale and effectively. The free version has limitations and is not always available. Parents will realize they can take classroom content and load it in various AI instances to see what type of value they are getting for their tuition.

Putting the reality of educational outcomes aside, most parents paying tuition would react negatively if they found:

- Content from resources like Teachers-Pay-Teachers

- Content that has been recycled year after year without revision

- Content with glaring subject matter mistakes (parents will use the AI as a subject matter expert)

- Comments and other formative information that was not generated by the teacher

In the open market, as an AI developer, I would be selling to parents. It is a new frontier for them as well as education.

The best move you can make in 2023 with ChatGPT as a school

I use these AI tools nearly every day. I am working on learning how to implement them within systems to assist with coding and data analytics. This type of work suits the AI, does not involve sensitive data and meets the terms of service.

If your plans for AI do not follow a legal pathway, or if they create confusion with policies and procedures, your goals will fail.

The cost of that failure will be the community not wanting to use the next version of these tools suitable for the classroom. That valuable asset, time, will be wasted on training people on a system that is literally in training and soon to be replaced.

Planning for the future, I have confirmed with the AI that prompts are not needed. Prompts are designed to help people ask better questions. Prompts, at least the published ones, are optional to achieve any result, according to ChatGPT.

Imagine training a hundred people to use prompts, only for the next version of the AI to do without them. This outcome is likely. Many of the ebooks I have seen are pushing prompts as the key to success.

The AI, based on data we can see on our client computers, is developing a history with the user. The AI will likely follow the path of least resistance and develop a relationship based on language formality, everyday business use, and shared topics.

Here is an example of a person working in cyber security:

First Time with the request:

Person: I need a SOC 2 report for my manager and the executive team; limit it to [number of words]; the language needs to be moderately technical for a professional audience

AI: Creates the output

Fifth Time with the request:

Person: Please create a SOC 2 report, standard format.

AI: Creates the output

Working with the AI as a person or intelligence will create efficiency. The AI will learn what you require and ask for more information if needed.

Learning to be precise with your language and having a deep understanding of your subject matter creates valuable AI output. AI is a reflection of a person's knowledge and growth.

Right now, in 2023, what a school or teacher needs to do is wait. Set up a team of people to use the AI as a software client and pay for a few subscriptions.

The team should test other AI products and instances, not just the popular ones.

The school should develop a legal framework for using AI in the classroom or business office. They should also create policies and procedures and open them for public comment within the organization.

Finally, simulate parents using AI tools to vet classroom materials and content.

My advice in a sentence:

Get ready and be excited, but use caution and move with purpose.
